Best Laptops for Ollama in 2026: Run Local LLMs Without the Cloud

Best laptops for running Ollama and local LLMs in 2026. From Llama to Mistral to DeepSeek — here's the hardware you need for fast local AI inference.

Ollama has made running large language models locally as simple as a single terminal command. Pull a model, run it, and you have a local AI that works without internet, without API keys, and without sending a single line of your code to the cloud. Developers are using Ollama to power everything from Continue.dev and Cline to custom AI pipelines.

But local LLM inference is the most hardware-dependent task in modern development. As a rule of thumb, plan on at least 8GB of RAM to run a 7B parameter model and 16GB for a 13B model. Running DeepSeek Coder 33B or Llama 3 70B, the models that actually rival cloud APIs in quality, requires 32GB or more, and a 70B model sits well above that floor even when quantized. And that is before your IDE, dev server, and browser claim their share.
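As a rough check on the figures above, memory for the weights alone can be approximated as parameter count times bits per weight. The sketch below is back-of-the-envelope arithmetic, not a measurement: the function name and the two quantization levels are illustrative assumptions, and it ignores the KV cache and runtime overhead, which add more on top.

```python
def estimate_weight_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Compare a 4-bit quantized model against unquantized 16-bit weights.
for size in (7, 13, 33, 70):
    four_bit = estimate_weight_gb(size, 4)
    sixteen_bit = estimate_weight_gb(size, 16)
    print(f"{size}B: ~{four_bit:.1f} GB at 4-bit, ~{sixteen_bit:.1f} GB at 16-bit")
```

The gap between the 4-bit and 16-bit columns is why quantized models are the practical choice on a laptop, and why the RAM guidelines leave room beyond the raw weight size.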

The other critical factor is speed. Inference on CPU is usable but slow — maybe 10 tokens per second on a good machine. Apple Silicon's unified memory architecture or a laptop with a discrete GPU can push that to 30-50+ tokens per second, which is the difference between a useful coding assistant and a frustrating one.
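To see where your own machine falls in that range, you can compute tokens per second from the timing fields in the response from Ollama's local REST API. This is a minimal sketch, assuming Ollama is serving on its default port 11434 and that the model you name has already been pulled. The helper names are hypothetical. `eval_count` and `eval_duration` (in nanoseconds) are the fields returned by `/api/generate`.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # default local endpoint


def tokens_per_second(response: dict) -> float:
    """Generation speed from Ollama's timing fields (eval_duration is in ns)."""
    return response["eval_count"] / response["eval_duration"] * 1e9


def generate(model: str, prompt: str) -> dict:
    """Send one non-streaming request to a locally running Ollama server."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    request = urllib.request.Request(
        OLLAMA_URL,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as reply:
        return json.loads(reply.read())
```

With the server running, `tokens_per_second(generate("llama3", "Explain unified memory in one sentence."))` gives a single reading, where `llama3` stands in for whichever model you have pulled. Averaging a few runs gives a steadier number, since the first request also pays the cost of loading the model.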

These laptops are ranked by what actually matters for local LLM performance.

Top Picks for Ollama

— skip ahead or keep reading for the full breakdown

- #1

ASUS ROG Strix G16 (RTX 5060)

Best Dedicated GPU

$1,259See Today's Price → - #2

MacBook Pro 16" (M4 Max)

Best Unified Memory for AI

$3,422See Today's Price → - #3

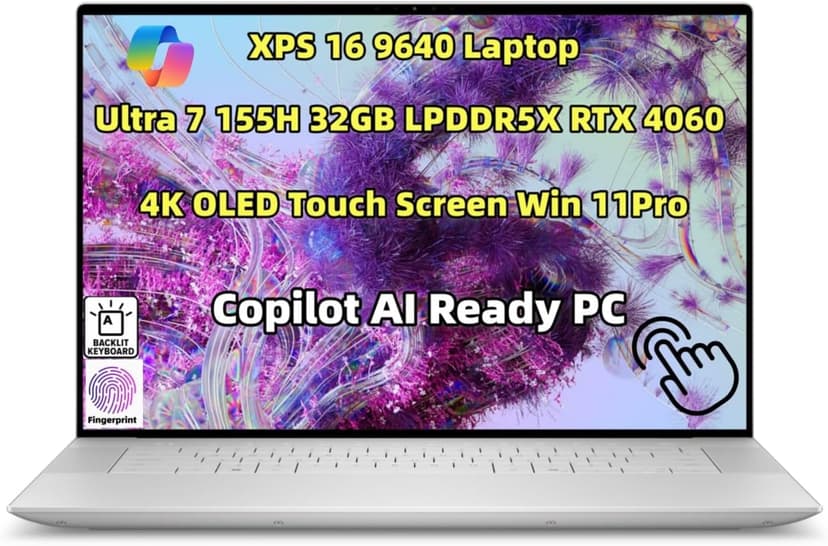

Dell XPS 16 (9640)

Best Windows Workstation

$2,749See Today's Price →

The Specs That Actually Matter

RAM: The Single Most Important Spec

Minimum: 16GB. Recommended: 32GB. Ideal: 64GB.

This is not negotiable. Modern development with Ollama is RAM-hungry:

- Your IDE: 1–3GB

- AI coding assistant (Claude Code, Cursor): 2–4GB

- Browser with dev tools open: 2–6GB

- Node.js dev server: 1–2GB

- OS and background processes: 3–4GB

That is 9–19GB just for a basic setup. With 16GB, you are already swapping to disk. With 32GB, you have headroom. With 64GB, you can run local models alongside everything else.
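The arithmetic behind that range, and what it leaves for a local model, can be sketched as follows. The per-application figures are the estimates from the list above. The function names are illustrative, and the headroom is a simple subtraction that ignores swap and memory compression.

```python
# (low, high) GB estimates taken from the list above
BASELINE_GB = {
    "IDE": (1, 3),
    "AI coding assistant": (2, 4),
    "Browser with dev tools": (2, 6),
    "Node.js dev server": (1, 2),
    "OS and background processes": (3, 4),
}


def baseline_range(usage: dict) -> tuple:
    """Total RAM used by the baseline setup in the lightest and heaviest case."""
    low = sum(lo for lo, _ in usage.values())
    high = sum(hi for _, hi in usage.values())
    return low, high


def model_headroom(total_ram_gb: int, usage: dict) -> tuple:
    """RAM left for a local model in the heaviest and lightest baseline case."""
    low, high = baseline_range(usage)
    return total_ram_gb - high, total_ram_gb - low


for ram in (16, 32, 64):
    worst, best = model_headroom(ram, BASELINE_GB)
    print(f"{ram}GB: {worst} to {best} GB left for model weights")
```

A negative number in the 16GB row means the baseline setup alone can exceed physical RAM, which is the swapping described above.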

Bottom line: 16GB works but you will feel the ceiling. 32GB is the sweet spot. 64GB is future-proof.

CPU: Multi-Core Performance Wins

AI coding tools, TypeScript compilation, and dev servers all benefit from multi-core performance. You want:

- Apple Silicon (M3/M4 series): Best performance-per-watt, excellent for sustained workloads

- AMD Ryzen 9 / Intel Core Ultra 9: Strong multi-threaded performance on Windows/Linux

- Avoid: Anything below 8 cores in 2026

Display: You Need Screen Real Estate

Working with Ollama means having your editor, an AI chat panel, a browser preview, and maybe a terminal all visible simultaneously. A cramped screen kills the workflow.

- Minimum: 14 inches, 1920x1200

- Recommended: 16 inches, 2560x1600 or higher

- External monitor: Strongly recommended regardless of laptop screen size

Storage: NVMe SSD, 512GB Minimum

Fast storage speeds up everything — project loading, dependency installation, AI model caching. Get an NVMe SSD with at least 512GB. 1TB is better if you work on multiple projects or experiment with local models.

Battery Life: The Marathon Factor

Development sessions can last hours. AI assistants and dev servers are power-hungry. Look for laptops that deliver 6+ hours of real development use, not the manufacturer's optimistic "up to 20 hours of video playback" claims.

The Best Laptops for Ollama in 2026

ASUS ROG Strix G16 (RTX 5060)

$1,259

Pros

- RTX 5060 GPU — next-gen NVIDIA for ML and AI workloads

- 16-inch 165Hz display — great for coding and gaming

- Excellent price for dedicated GPU power at $1,259

- 16 cores / 24 threads for fast compilation and builds

- 4.5/5 rating with 376+ reviews — proven reliability

Cons

- 16GB RAM limits large model training

- Heavier at 5.8 lbs — not ultraportable

Best for: Machine learning engineers, data scientists, and anyone who needs dedicated GPU power for local model training or AI image generation.

MacBook Pro 16" (M4 Max)

$3,422

Pros

- 48GB or 128GB unified memory — no bottlenecks

- Up to 16 CPU cores handles everything

- Exceptional battery life for a pro machine

- Silent under load — fans rarely spin up

- Best-in-class Liquid Retina XDR display

Cons

- Expensive — starts at $3,422

- Overkill if you only do web development

Best for: Professional developers and founders who want the best experience and can justify the investment.

Dell XPS 16 (9640)

$2,749

Pros

- Stunning 4K OLED touchscreen display

- 32GB LPDDR5x RAM standard

- NVIDIA RTX 4060 GPU for ML workloads

- Thunderbolt 4 and WiFi 7 connectivity

Cons

- Premium price at $2,749

- Shorter battery life than MacBooks

Best for: Windows developers, ML engineers, and anyone who needs a dedicated GPU alongside serious coding power.

MacBook Pro 14" (M4 Pro)

$1,799

Pros

- Perfect balance of power and portability at 3.5 lbs

- M4 Pro with 12-core CPU — serious workstation performance

- Liquid Retina XDR display with ProMotion

- Outstanding battery life for a Pro machine

- Three Thunderbolt 4 ports plus HDMI and SD card

Cons

- Still expensive at $1,799+

- 14-inch screen can feel cramped for multi-pane coding

Best for: Developers who want Pro performance in a more portable package — the sweet spot for most professionals.

Lenovo ThinkPad P16s Gen 3

$2,299

Pros

- Up to 96GB DDR5 RAM — run large local AI models

- Workstation-grade CPU for heavy workloads

- OLED display option available

- MIL-STD-810H durability — built to last

- Excellent Linux support — ThinkPad gold standard

Cons

- Heavier than MacBook Air alternatives

- Battery life shorter under heavy AI workloads

Best for: AI researchers, developers experimenting with local models, and ThinkPad enthusiasts.

Quick Comparison

| Laptop | RAM | CPU Cores | Screen | Battery (dev use) | Price | Rating |
|---|---|---|---|---|---|---|
| ASUS ROG Strix G16 (RTX 5060) | 16GB | 16 cores / 24 threads | 16" 1920x1200 165Hz | 3–5 hrs | $1,259 | 4.5/5 |
| MacBook Pro 16" (M4 Max) | 48–128GB | 14–16 cores | 16.2" 3456x2234 | 6–8 hrs | $3,422 | 4.6/5 |
| Dell XPS 16 (9640) | 32GB | 16 cores | 16.3" 3840x2400 OLED | 5–7 hrs | $2,749 | 4.9/5 |
| MacBook Pro 14" (M4 Pro) | 24GB | 12 cores | 14.2" 3024x1964 | 7–9 hrs | $1,799 | 4.8/5 |
| Lenovo ThinkPad P16s Gen 3 | Up to 96GB | 16 cores | 16" 3840x2400 OLED | 5–7 hrs | $2,299 | 4.5/5 |

My Recommendation

If you want dedicated GPU power for Ollama at the lowest price in this guide: get the ASUS ROG Strix G16 (RTX 5060). It earned the #1 spot as the Best Dedicated GPU pick, though its 16GB of RAM is the limit you will hit first.

If you want to run the largest models and can afford it: the MacBook Pro 16" (M4 Max), the Best Unified Memory for AI pick, gives you 48GB or 128GB of unified memory at the highest price here.

Also worth considering: the Dell XPS 16 (9640), the Best Windows Workstation in this category and a strong pick if the top two do not fit your needs.

The common thread: do not skimp on RAM. Everything else — CPU speed, screen resolution, storage — is secondary. RAM is the bottleneck that turns Ollama from a flow state into a frustration.

Have a laptop recommendation I missed? Reply to the newsletter and let me know — I update this guide regularly.
